HackMD is the commercial version of CodiMD. HackMD supports CommonMark and other markup syntax, such as: You can import and export documents from Dropbox, Google Drive, and GitHub gists. HackMD provides a variety of document templates and allows users to push documents to GitHub. You can use HackMD to write notes with other people on your computer, tablet, or phone. It’s yet another instance of data independence.HackMD is a real-time, multi-platform collaborative Markdown editor. By the way, I really think that this notion of having prepared data that does not know how it’s going to be used is a powerful idea. It’s a good default mode when you’re not sure what dimensions are going to be used in the dashboard. You can use CUBE when you want to group on all the combinations of dimensions. Thankfully, in Snowflake there are a couple of operators to ease this process. It’s also slightly error-prone if you’re juggling with a lot of dimensions. Writing down a GROUPING SETS operator can be a bit tedious. It also speeds up our dashboards because the heavy-lifting has already been done. This is great for us, as it minimizes the amount of SQL we put in Metabase. It’s just a very readable SELECT FROM WHERE. SELECT * FROM groups WHERE group_by = 'week x industry' week SELECT * FROM groups WHERE week IS NOT NULL AND industry IS NOT NULL - After One way to do this is by checking on the nullity of the columns:

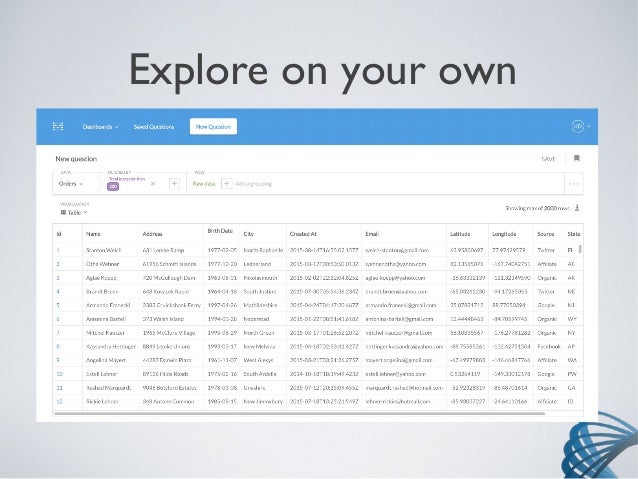

You can just filter the resulting table to look at the set of dimensions you’re interested in. The nice thing with GROUPING SETS is that all the computation has already been performed. This costs precious seconds as well as compute credits. Moreover, the desired aggregation has to be performed live. Ideally, dashboards allow choosing which dimensions to drill down on. A dashboard is very often just an interface to display metrics grouped by various dimensions. This is why the tidy data concept data is so powerful: the data is ready to be aggregated. A pattern for creating dashboardsĭata analysis very often boils down to looking at a metric, and drilling down to understand its distribution. That’s it really, the GROUPING SET operator simply performs and collates many GROUP BY in one fell swoop. In fact, you could just as well implement a GROUPING SET yourself by concatenating many GROUP BY query results together via some UNION operators. This is in fact the concatenation of three smaller tables: SELECT week, industry, SUM ( claims ) / SUM ( premiums ) AS loss_ratio FROM accounts WHERE country = '□□' GROUP BY GROUPING SETS ( ( week ), ( industry ), ( week, industry ) ) week Assuming we’ve collected these figures at a company level, this might result in such a tidy dataset: week We do this by comparing premiums, which is the money we receive from the people we cover, with claims, which are the healthcare expenses we reimburse. What GROUPING SETS doesĪs a health insurance company, it is key for us is to keep track of our margin. Before delving into said pattern, let us start by dwelling on what this operator does. This has unlocked quite a powerful pattern for us. We recently discovered that Snowflake has a GROUPING SETS operator. One of them is to prepare data in such a way that it can be consumed in a dashboard with minimal effort. We’re addressing this via various initiatives. We’ve agreed that the less SQL code is in Metabase, the better. 
Generally speaking, we wish to change our relationship with Metabase. Moreover, when we make a change to our prepared data, it’s burdensome to propagate the changes into our dashboards.

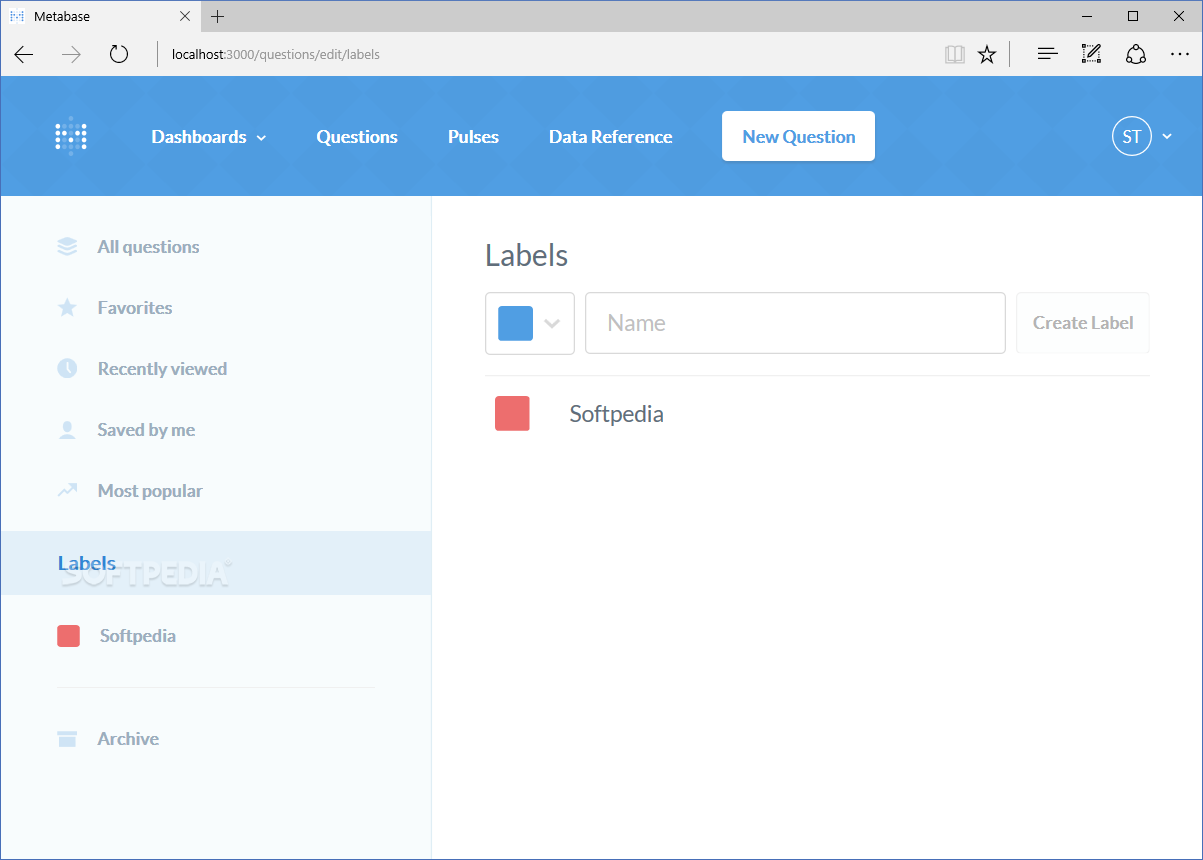

There is also business logic that is duplicated in many places across Metabase. One of the issues we’re facing is that we have a lot of business logic that is stored in Metabase, rather than being versioned in our analytics codebase. This gives us the liberty to do whatever we want between the data warehouse and the visualisation. Metabase allows querying the data warehouse with SQL. Recently, we’ve been having a lot of discussion around our setup. Our data analysis is done on top of our prepared data.

We transform this into prepared data via an in-house tool that resembles dbt. This includes dumps of our production database, third-party data, and health data from other actors in the health ecosystem. We load data into our warehouse with Airflow. Our data warehouse used to be PostgreSQL, and have since switched to Snowflake for performance reasons. At Alan, we do almost all our data analysis in SQL.
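To make the UNION equivalence mentioned above concrete, here is a minimal sketch in Python using the standard sqlite3 module. SQLite has no GROUPING SETS operator, so the three GROUP BY queries are concatenated by hand with UNION ALL. The toy rows, the layout of the groups table, and the way the group_by label column is filled are assumptions made for illustration, not Alan's actual data or pipeline.

```python
import sqlite3

# Hypothetical toy data: (week, industry, premiums, claims).
ROWS = [
    ("2022-W01", "tech", 100.0, 80.0),
    ("2022-W01", "retail", 200.0, 150.0),
    ("2022-W02", "tech", 100.0, 60.0),
    ("2022-W02", "retail", 200.0, 210.0),
]


def build_groups(conn):
    """Emulate GROUPING SETS ((week), (industry), (week, industry)) with UNION ALL."""
    conn.execute(
        "CREATE TABLE accounts (week TEXT, industry TEXT, premiums REAL, claims REAL)"
    )
    conn.executemany("INSERT INTO accounts VALUES (?, ?, ?, ?)", ROWS)
    conn.execute(
        """
        CREATE TABLE groups AS
        -- Grouped by week only: industry is left NULL.
        SELECT 'week' AS group_by, week, NULL AS industry,
               SUM(claims) / SUM(premiums) AS loss_ratio
        FROM accounts GROUP BY week
        UNION ALL
        -- Grouped by industry only: week is left NULL.
        SELECT 'industry', NULL, industry, SUM(claims) / SUM(premiums)
        FROM accounts GROUP BY industry
        UNION ALL
        -- Grouped by both dimensions.
        SELECT 'week x industry', week, industry, SUM(claims) / SUM(premiums)
        FROM accounts GROUP BY week, industry
        """
    )


conn = sqlite3.connect(":memory:")
build_groups(conn)

# Filter 1: keep rows where both dimensions are set.
by_nullity = conn.execute(
    "SELECT week, industry, loss_ratio FROM groups "
    "WHERE week IS NOT NULL AND industry IS NOT NULL "
    "ORDER BY week, industry"
).fetchall()

# Filter 2: keep rows by their grouping-set label.
by_label = conn.execute(
    "SELECT week, industry, loss_ratio FROM groups "
    "WHERE group_by = 'week x industry' "
    "ORDER BY week, industry"
).fetchall()
```

On this toy data both filters select the same rows. Note that the nullity check is only reliable when the dimensions themselves never contain NULL values, which is one reason a label column can be handy.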
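Since CUBE groups on all the combinations of dimensions, it amounts to a GROUPING SETS clause that lists every subset of those dimensions, including the empty set for the grand total. The sketch below, in Python, enumerates those subsets and renders the clause as text. The helper names are hypothetical, and the output is a plain string meant to be pasted into a query, not a Snowflake API.

```python
from itertools import combinations


def cube_grouping_sets(dimensions):
    """List every subset of the dimensions, from the full set down to the empty set."""
    sets = []
    for size in range(len(dimensions), -1, -1):
        sets.extend(combinations(dimensions, size))
    return sets


def grouping_sets_clause(sets):
    """Render grouping sets as the text of a GROUP BY GROUPING SETS clause."""
    rendered = ", ".join("(" + ", ".join(s) + ")" for s in sets)
    return f"GROUP BY GROUPING SETS ({rendered})"


two_dims = cube_grouping_sets(["week", "industry"])
clause = grouping_sets_clause(two_dims)
```

The number of grouping sets doubles with every extra dimension (2 dimensions give 4 sets, 3 give 8), which is why spelling them out by hand quickly becomes tedious and error-prone.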
